Microsoft-backed OpenAI said on Monday that its safety committee will, as an independent body, oversee the security and safety processes for the company’s artificial intelligence model development and deployment.

The change follows the committee’s own recommendations to OpenAI’s board, which were made public for the first time.

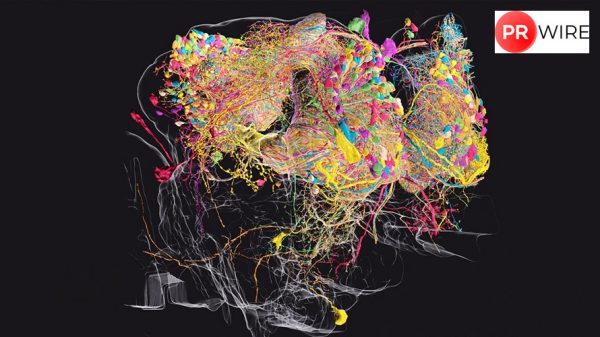

OpenAI, the company behind the viral chatbot ChatGPT, formed its Safety and Security Committee this May to evaluate and further develop the company’s existing safety practices.

The launch of ChatGPT in late 2022 sparked a significant wave of interest and excitement around AI.

The buzz around ChatGPT highlighted both the opportunities and challenges of AI, prompting discussions on ethical use and potential biases.

As part of the committee’s recommendations, OpenAI said it is evaluating the development of an “Information Sharing and Analysis Center (ISAC) for the AI industry, to enable the sharing of threat intelligence and cybersecurity information among entities within the AI sector.”

The independent committee will be chaired by Zico Kolter, professor and director of the machine learning department at Carnegie Mellon University, who is also a member of OpenAI’s board.

“We are pursuing expanded internal information segmentation, additional staffing to deepen around-the-clock security operations teams,” according to OpenAI.

The company also said it will work toward becoming more transparent about the capabilities and risks of its AI models.

Last month, OpenAI signed a deal with the United States government for research, testing and evaluation of the company’s AI models.

The article originally appeared on Business Standard.